Supplying and Demanding AI Skills

AI for DC

Hi

Welcome (back) to The Prompt. AI isn’t well understood, but we learn a lot in our work that can help. In this newsletter, we share some of those learnings with you. If you find them helpful, make sure you’re signed up for the next issue.

[Insight] AI skilling demand and deserve

Employer demand for AI skills is clear: employers are actively paying and hiring for AI fluency. A large-sample AWS-Access Partnership survey found 73% of employers prioritize hiring AI‑skilled workers. PwC’s 2025 Global AI Jobs Barometer shows that roles listing AI skills carry an average 56% wage premium, nearly double the premium of a year earlier, and job ads requiring AI skills rose 7.5% year‑over‑year even as total postings fell 11.3%. Chief Information Officers are backing this with budget: IDC projects that $337 billion will be spent worldwide in 2025 on technology supporting AI strategies, with total AI spending doubling to $632 billion by 2028.

Just as important, we believe that workers deserve the training and skills to use AI effectively. That’s why we’re building OpenAI Certifications and the OpenAI Jobs Platform, and working with iconic employers, Main Street businesses and state government offices across the US to get AI skills training to as many Americans as possible, fast. Access to AI only really works for people if they know how to use it to unlock economic opportunity for themselves.

In our new report on Jobs in the Intelligence Age, we propose some general best practices on both the demand and “deserve” sides:

In practice, organizations should budget paid training time, offer micro‑credentials tied to real workflows, and provide a simple ladder from basic fluency to more sophisticated operations. This people-centric approach ensures that workers can delegate tasks to AI confidently and review its output responsibly while remaining accountable for outcomes.

Communities should foster public-private partnerships among employers, community colleges, universities, and government agencies to build a strong pipeline of AI-skilled workers through continuous curriculum development, training, and job-matching.

Strong collaboration with unions can help scale AI literacy quickly and help ensure that employees drive the implementation so that it complements their work. Co-designing training programs with unions can help those programs reflect the realities of the workplace and prioritize workplace safety.

For more, see our full report on Jobs in the Intelligence Age.

[AI Economics] Lessons from past re-skilling efforts

To help inform OpenAI Certifications and the Jobs Platform, our Economic Research team reviewed recent research on previous re-skilling initiatives so that we can adapt based on what has and hasn’t worked. Two takeaways stood out:

Need for a clear match with labor market and worker needs: It’s important to align training with real job opportunities. Many past programs were disconnected from local employer needs and in-demand skills. This remains an acute risk with AI, because the skills employers want may change more rapidly. It is part of why our Certifications program will be developed and implemented in conjunction with employer partners.

Data collection and analysis of success are essential: Public training systems often lack transparency and measurable outcomes. In the US, key performance data such as program completion rates, job placement, and wage gains are missing for over 75% of workforce training programs, making it nearly impossible for workers or employers to assess value. The sector is also highly fragmented, with minimal system-level data and no clear understanding of what drives successful reskilling efforts.

Beyond broad program lessons, new research gives us a closer look at what happens to workers whose jobs are directly affected by AI. One large study tracked more than 1.6 million cases of people in US federal job training programs over a decade, linking their training choices to earnings and AI exposure. It found that workers from AI-impacted jobs did see pay increases (about $1,470 more per quarter). But those who retrained into fields with less AI exposure tended to earn more than those who tried to double down on AI-related skills.

For us at OpenAI, the key lesson from all of this research is that re-skilling initiatives must be employer-aligned, embedded into real work contexts, transparent in outcomes, and personalized at scale. Unlike traditional one-off retraining efforts, AI-enabled tools can provide adaptive, just-in-time learning experiences, micro-credentials, and on-demand coaching that map directly to evolving skill needs.

— Rachel Brown, OpenAI Economic Research

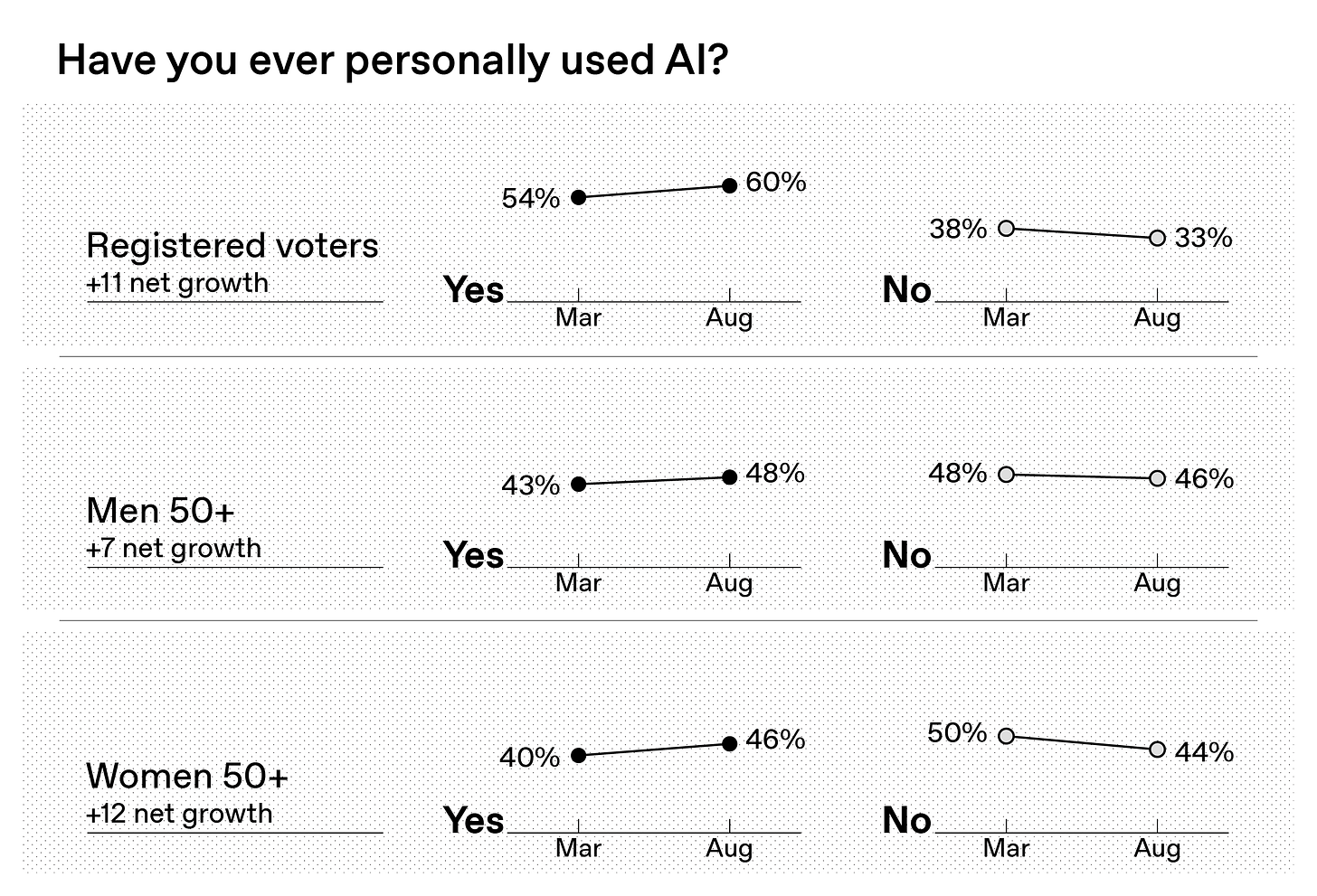

[Data] Tracking the growth in AI use

New findings show the increase in the share of Americans using AI – and where that growth is mostly coming from.

Back in March, according to a nationwide KAConsulting survey we commissioned, 54% of registered voters said they personally use AI, versus 38% who said they don’t. Five months on, on that exact same question, KAConsulting finds that 60% of voters personally use AI, compared with 33% who don’t (Aug. 8-13, 800 national registered voters, +/- 3.0%). That jump is outside the poll’s margin of error:

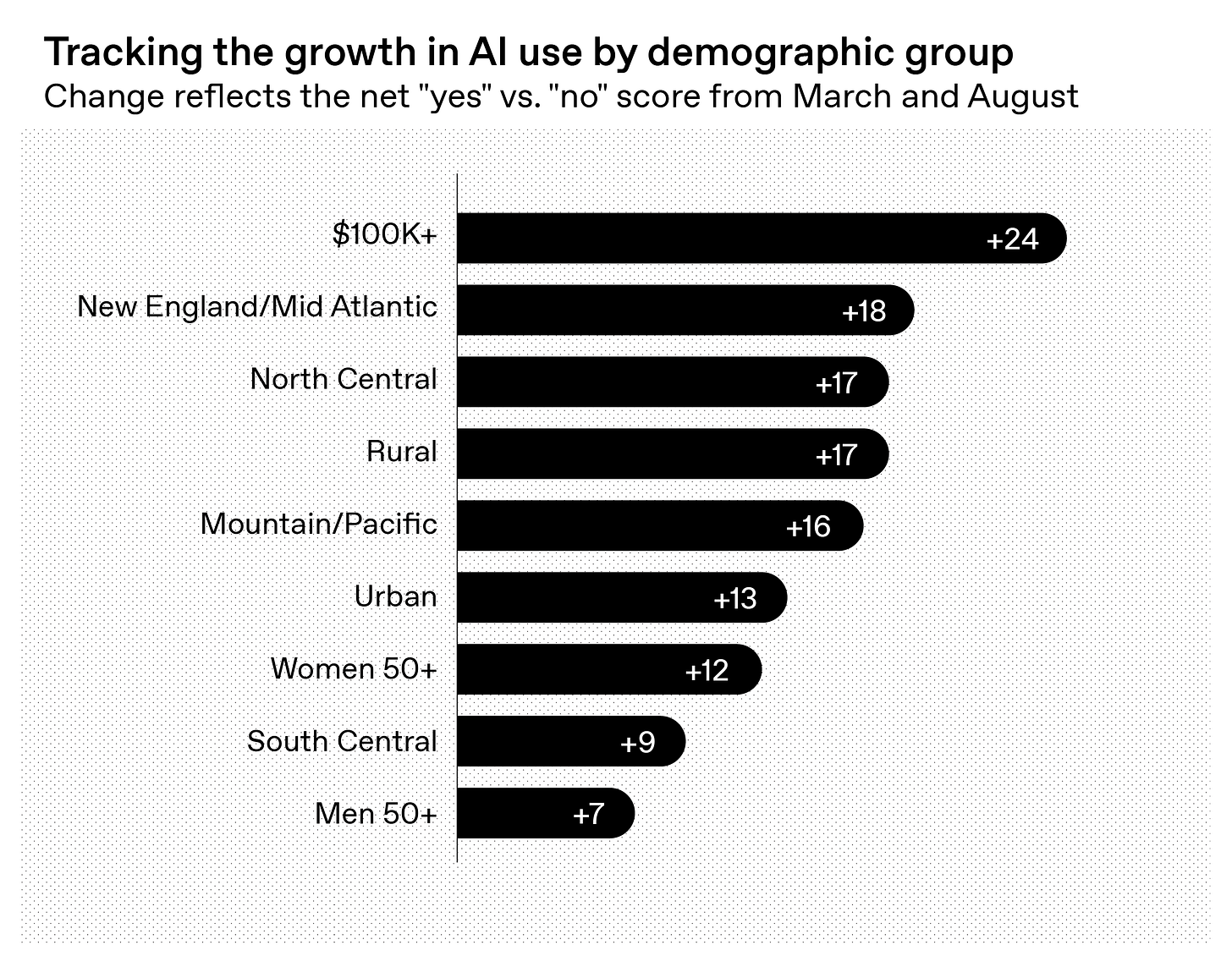
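For readers who want to check the margin-of-error claim themselves, here is a minimal sketch of the arithmetic. It assumes the March survey had the same +/- 3.0% margin as the August one (the March margin isn’t stated above) and that the two samples were independent; the function and variable names are ours, for illustration only.

```python
import math


def diff_margin(moe_a: float, moe_b: float) -> float:
    """Margin of error for the difference between two independent poll estimates.

    Combines the two polls' margins by root-sum-of-squares, which is the
    standard approach when both margins use the same confidence level.
    """
    return math.sqrt(moe_a ** 2 + moe_b ** 2)


march_use = 54.0    # % of registered voters who said they use AI (March)
august_use = 60.0   # % of registered voters who said they use AI (August)

august_moe = 3.0    # stated in the August survey details
march_moe = 3.0     # ASSUMPTION: not stated in the text; set equal to August

change = august_use - march_use
threshold = diff_margin(march_moe, august_moe)

print(f"change = {change:.1f} points; margin on the change = {threshold:.2f} points")
print("outside the margin:", change > threshold)
```

Under those assumptions, the six-point change clears both the single-poll margin and the somewhat wider margin that applies when comparing two separate polls.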

So which demographic subgroups have moved the most over the past five months? According to the survey, it’s, yes, those who earn more than $100K per year (+24) and urban residents (+13) – but it’s also those who live in rural areas (+17) and women (+12). Take a look at the shifts here:

[About] OpenAI Forum

Explore past and upcoming programming by and for our community of more than 30,000 AI experts and enthusiasts from across tech, science, medicine, education and government, among other fields.


[Disclosure]

Graphics created by Base Three using ChatGPT.

‘AI fluency’ sounds great, but it’s still so broad it could mean anything. Are we talking:

• Prompt literacy and structured prompting?

• Fine-tuning vs. RAG vs. embeddings — knowing when to use which?

• System design skills like chaining models, using vector DBs, and orchestrators (LangChain, LlamaIndex)?

• Understanding RLHF, alignment tradeoffs, and how safety layers interact with base models?

• Knowledge of recursive systems — feedback loops, output monitoring, and reinforcement collapse risks?

• Governance literacy: privacy, model evals, interpretability tools (e.g., Evals, SHAP, TCAV)?

• Or just being able to open ChatGPT and not panic?

All of those are wildly different skills with wildly different stakes, and lumping them under ‘fluency’ flattens what people actually need to learn.